GSMA Launches "AI Calling Native" Application Experience Specifications at MWC 2026

Barcelona, Spain — At the 5G Futures Summit held by GSMA during Mobile World Congress Barcelona 2026, GSMA released an official white paper titled “Gigauplink, Deterministic Latency, and Network Evolution for the Mobile AI Era”. The report examines trends in network development and evolution, application scenarios, and business models for native voice services from operators in the mobile AI era. The paper also outlines specifications for evaluating the AI Calling experience to enable operators to build networks focused on voice service quality and significantly enhance user experience.

According to the report, the synergy between 5G-Advanced (5G-A) and artificial intelligence has brought mobile communication into the mobile AI era. Operators are now transforming native voice services, moving from conventional voice calls to AI voice calls. By integrating AI algorithms and computing capabilities into IMS voice networks, conventional calling services have evolved into more advanced and innovative offerings. This development will deliver a new generation of calling experiences that are stable, HD-quality, visual, intelligent, and efficient. The emergence of new services such as AI immersive calling and AI interactive calling places new demands on network connectivity and AI capabilities.

In the report’s discussion, AI-based noise reduction is presented as a primary application in AI immersive calling. By leveraging AI algorithms to eliminate noise in various situations, operators can deliver clearer call quality and more immersive communication experiences to users. AI-based noise reduction algorithms can be applied in various conditions, including offices (noise level >40 dB), highways (noise level >60 dB), and construction sites (noise level >80 dB). With this technology, users can enjoy high-quality voice services without depending on specific terminal devices. Additionally, real-time AI-based translation serves as a primary example of AI interactive calling. With enhanced voice network capabilities, language barriers can be overcome. AI Calling services facilitate voice transcription or translation directly during video calls. In this way, the service assists business professionals attending international conferences, travellers heading abroad, and individuals with hearing impairments.

The report also emphasises that operators can integrate AI capabilities into native voice services to enhance their business models. By paying for a subscription, users can access a range of additional AI-based features when making regular calls. This helps operators move from a single traffic-based monetisation model to more diverse, experience-based monetisation.

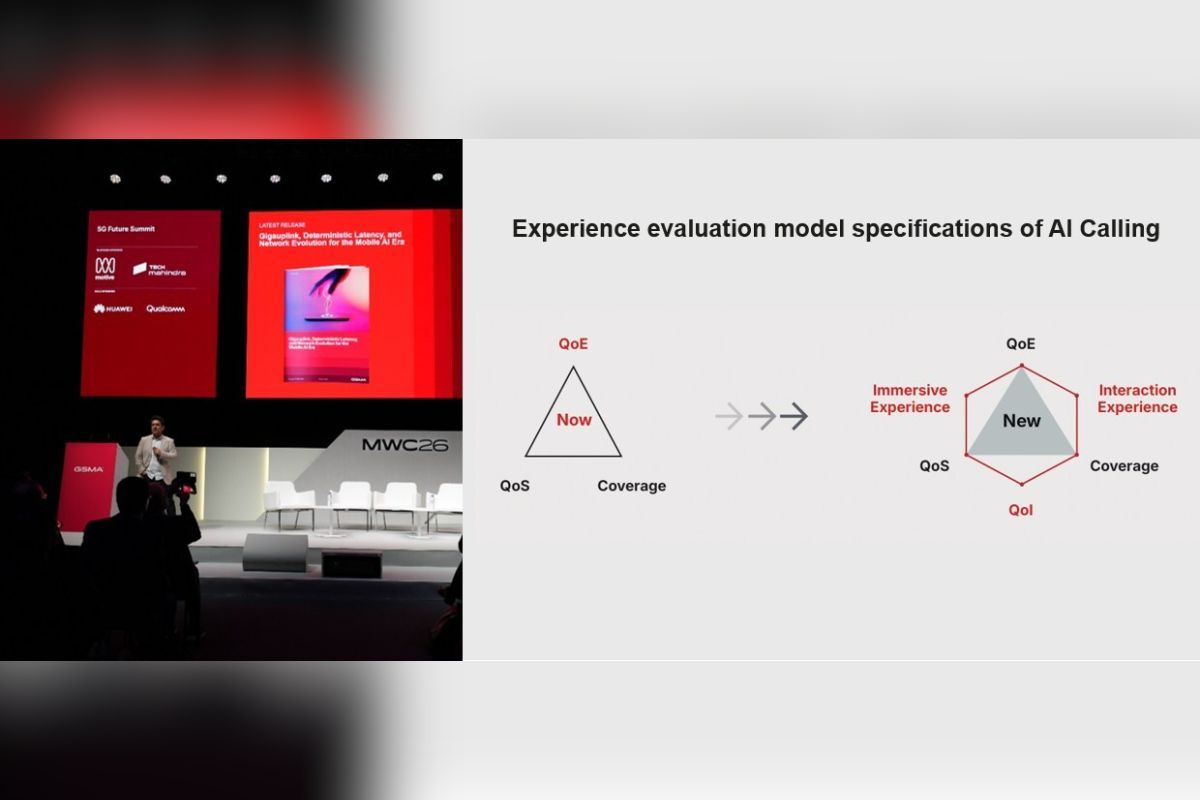

In the AI Calling scenario, measuring user experience becomes a new challenge for operators. Therefore, the report systematically defines an AI Calling experience evaluation model. Beyond the three experience indicators for conventional HD voice services—Quality of Experience (QoE), Quality of Service (QoS), and coverage—the AI Calling evaluation model adds three new indicators: AI immersive experiences, AI interactive experiences, and Quality of Intelligence (QoI). Immersive calls can enhance user experience in basic voice calls, for example through significant improvements in Mean Opinion Score (MOS) and Signal-to-Noise Ratio (SNR). Meanwhile, interactive calls require the network to have new interaction channels, such as Data Channel (DC) and Video Channel (VC), which facilitate additional features such as screen sharing, real-time translation, and interaction with AI agents. The QoI indicator becomes an important parameter for measuring the intelligence level of voice networks. Its measurement includes AI model quality, AI management flexibility, the network’s ability to understand user and network conditions, and the inclusivity of AI services. All these aspects form the important foundation for improving the quality of voice service experience.

The International Telecommunication Union (ITU) has initiated a project named P.AI-MOS to evaluate user experience in multimodal AI applications. However, proposals for voice experience standards specific to AI Calling remain at the research stage. To accelerate the development of such experience evaluation models, GSMA, together with industry partners, invites all stakeholders to collaborate in formulating rules that link Key Quality Indicators (KQIs) in AI applications with Key Performance Indicators (KPIs) in networks. This effort is also intended to accelerate the development of standards for mobile AI service experiences, whilst providing stronger support for the development of the AI industry in mobile communication.