Generation Z Must Be Cautious: AI Can Expose Anonymous Secondary Accounts

Generation Z faces a potential privacy crisis as artificial intelligence technology becomes capable of exposing the identities behind anonymous secondary accounts on social media platforms.

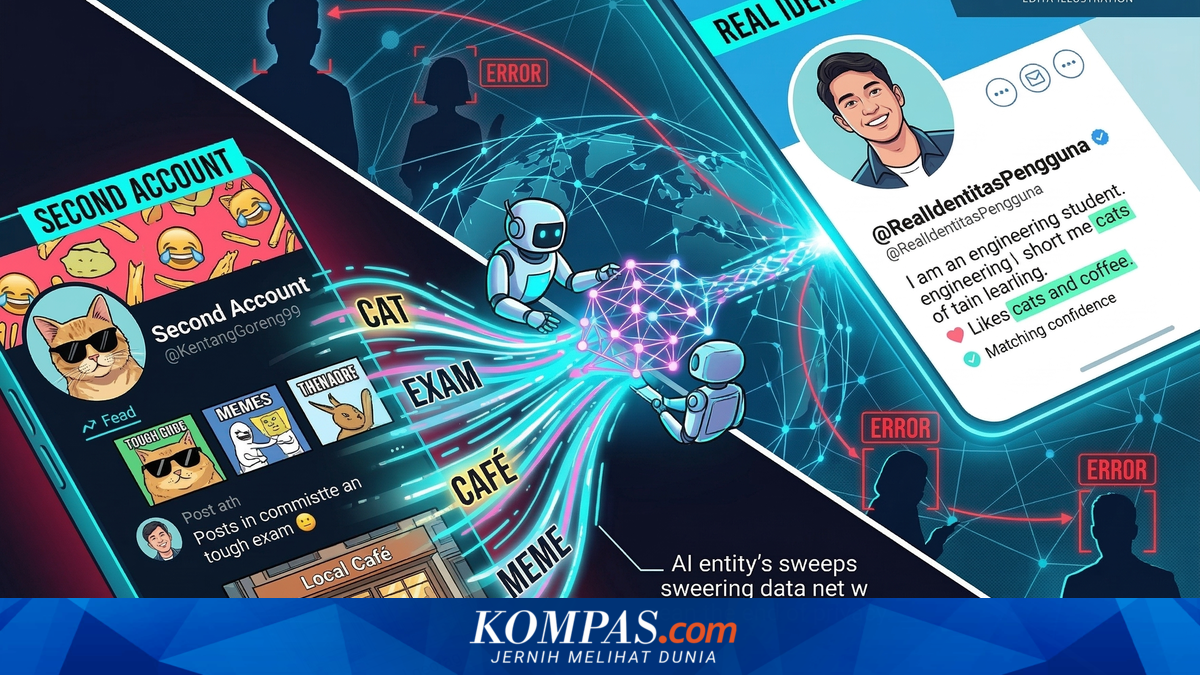

Keeping a secondary account on Instagram or TikTok has become an unwritten rule for Generation Z. Such accounts typically serve as a space to share personal grievances, post memes, and complain about university work or employment without fear of judgment from family or colleagues. Many users have felt protected by anonymous profile pictures and pseudonymous usernames. However, that sense of security is being undermined by advancing AI technology.

Recent research, reported by The Guardian and summarised by KompasTekno, shows that artificial intelligence can now expose the true identities behind such anonymous accounts with remarkable ease and accuracy. Two AI researchers, Simon Lermen and Daniel Paleka, have highlighted how Large Language Models, the technology underlying modern AI systems, can reveal real identities regardless of concealment efforts.

It makes no difference to a Large Language Model whether users hide their real names. Instead, the AI works by extracting contextual fragments from user posts. The researchers gave a practical example: imagine a secondary account holder who complains on TikTok about the difficulty of university examinations, mentions their pet cat’s name in another video, and accidentally posts about a café where they regularly socialise. Without the user realising it, an AI system can scour the internet to locate these same details on other platforms. It can then assemble this digital puzzle and match the secondary account to the primary account with a very high level of confidence.
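The matching step described above can be illustrated with a toy sketch. This is not the researchers' method: it swaps the language model for a simple set-overlap score (Jaccard similarity), and every account name and detail below is invented for illustration. It only shows why a handful of repeated details can be enough to link two accounts.

```python
def jaccard(a: set[str], b: set[str]) -> float:
    """Share of details two accounts have in common (0.0 to 1.0)."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def best_match(anonymous: set[str], candidates: dict[str, set[str]]) -> tuple[str, float]:
    """Return the candidate account whose details overlap most with the anonymous one."""
    scores = {name: jaccard(anonymous, details) for name, details in candidates.items()}
    top = max(scores, key=scores.get)
    return top, scores[top]


# Hypothetical details pulled from posts (all invented for this example).
secondary = {"exam stress", "cat named Mochi", "cafe on Elm Street"}
primary_accounts = {
    "user_a": {"exam stress", "cat named Mochi", "cafe on Elm Street", "graduation photo"},
    "user_b": {"exam stress", "football", "dog named Rex"},
}

match, score = best_match(secondary, primary_accounts)
print(match, score)
```

In this sketch `user_a` shares three of four distinct details with the secondary account and scores 0.75, while `user_b` shares only the common complaint about exams and scores 0.2. A real system would presumably weigh rare details, such as a pet's name, far more heavily than common ones.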

In more severe cases, the study authors warn that hackers can now conduct highly personalised fraud. Using profiles compiled by AI from secondary accounts, hackers could execute spear-phishing attacks, impersonate friends who know specific secrets, and trick users into clicking malicious links.

Dr Marc Juarez, a cybersecurity expert from the University of Edinburgh, cautioned that AI can break through the boundaries users set up on social media. “This is extremely concerning. This study proves that we must reconsider our privacy practices,” he stressed.

Researchers have also highlighted the risk of misidentification. AI could wrongly link a user to a problematic anonymous account simply because both share the same taste in bands or voice similar complaints online. “People could later be accused of things they never actually did,” one researcher warned.

To prevent such scenarios, scientists are urging major social media companies to immediately limit download rates and block automated scraping by bots. However, the most effective prevention lies in users’ own hands. Professor Marti Hearst from UC Berkeley emphasised that AI can only track users if they share consistent information across both accounts. Users who keep their behaviour and the information they share clearly different from one account to the other can significantly reduce the risk of identity exposure.